NVLink

Sep 06, 2024

Enhancing Application Portability and Compatibility across New Platforms Using NVIDIA Magnum IO NVSHMEM 3.0

NVSHMEM is a parallel programming interface that provides efficient and scalable communication for NVIDIA GPU clusters. Part of NVIDIA Magnum IO and based on...

7 MIN READ

Sep 05, 2024

Low Latency Inference Chapter 1: Up to 1.9x Higher Llama 3.1 Performance with Medusa on NVIDIA HGX H200 with NVLink Switch

As large language models (LLMs) continue to grow in size and complexity, multi-GPU compute is a must-have to deliver the low latency and high throughput that...

5 MIN READ

Aug 28, 2024

Boosting Llama 3.1 405B Performance up to 1.44x with NVIDIA TensorRT Model Optimizer on NVIDIA H200 GPUs

The Llama 3.1 405B large language model (LLM), developed by Meta, is an open-source community model that delivers state-of-the-art performance and supports a...

7 MIN READ

Aug 12, 2024

NVIDIA NVLink and NVIDIA NVSwitch Supercharge Large Language Model Inference

Large language models (LLMs) are getting larger, increasing the amount of compute required to process inference requests. To meet real-time latency requirements...

8 MIN READ

Jun 12, 2024

NVIDIA Sets New Generative AI Performance and Scale Records in MLPerf Training v4.0

Generative AI models have a variety of uses, such as helping write computer code, crafting stories, composing music, generating images, producing videos, and...

11 MIN READ

Apr 03, 2024

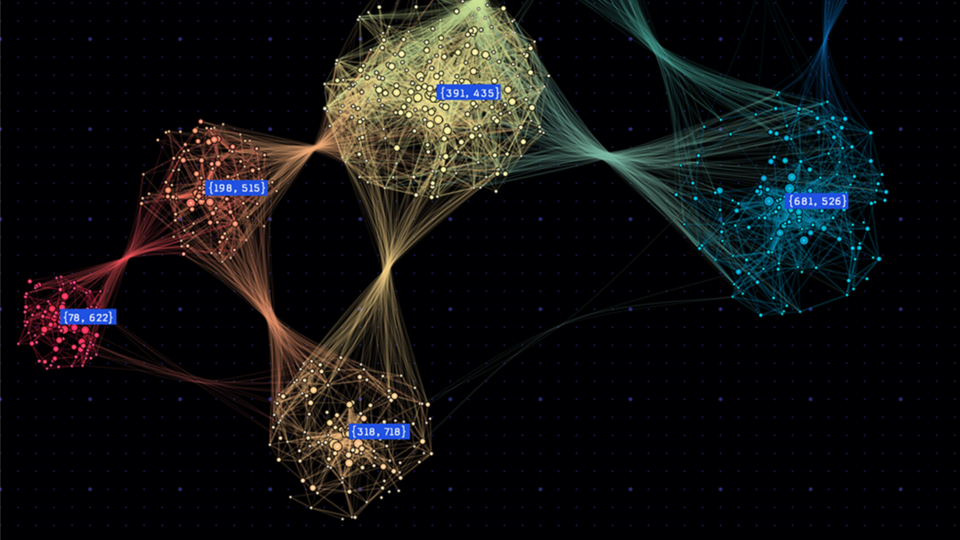

Optimizing Memory and Retrieval for Graph Neural Networks with WholeGraph, Part 2

Large-scale graph neural network (GNN) training presents formidable challenges, particularly concerning the scale and complexity of graph data. These challenges...

5 MIN READ

Mar 18, 2024

NVIDIA GB200 NVL72 Delivers Trillion-Parameter LLM Training and Real-Time Inference

What is the interest in trillion-parameter models? We know many of the use cases today and interest is growing due to the promise of an increased capacity for:...

9 MIN READ

Nov 28, 2023

One Giant Superchip for LLMs, Recommenders, and GNNs: Introducing NVIDIA GH200 NVL32

At AWS re:Invent 2023, AWS and NVIDIA announced that AWS will be the first cloud provider to offer NVIDIA GH200 Grace Hopper Superchips interconnected with...

9 MIN READ

Nov 08, 2023

Setting New Records at Data Center Scale Using NVIDIA H100 GPUs and NVIDIA Quantum-2 InfiniBand

Generative AI is rapidly transforming computing, unlocking new use cases and turbocharging existing ones. Large language models (LLMs), such as OpenAI’s GPT...

19 MIN READ

Oct 24, 2023

Reduce Apache Spark ML Compute Costs with New Algorithms in Spark RAPIDS ML Library

Spark RAPIDS ML is an open-source Python package enabling NVIDIA GPU acceleration of PySpark MLlib. It offers PySpark MLlib DataFrame API compatibility and...

8 MIN READ

Aug 29, 2023

Pioneering 5G OpenRAN Advancements with Accelerated Computing and NVIDIA Aerial

NVIDIA is driving fast-paced innovation in 5G software and hardware across the ecosystem with its OpenRAN-compatible 5G portfolio. Accelerated computing...

5 MIN READ

Jun 27, 2023

Breaking MLPerf Training Records with NVIDIA H100 GPUs

At the heart of the rapidly expanding set of AI-powered applications are powerful AI models. Before these models can be deployed, they must be trained through a...

15 MIN READ

May 28, 2023

Announcing NVIDIA DGX GH200: The First 100 Terabyte GPU Memory System

At COMPUTEX 2023, NVIDIA announced the NVIDIA DGX GH200, which marks another breakthrough in GPU-accelerated computing to power the most demanding giant AI...

6 MIN READ

Aug 23, 2022

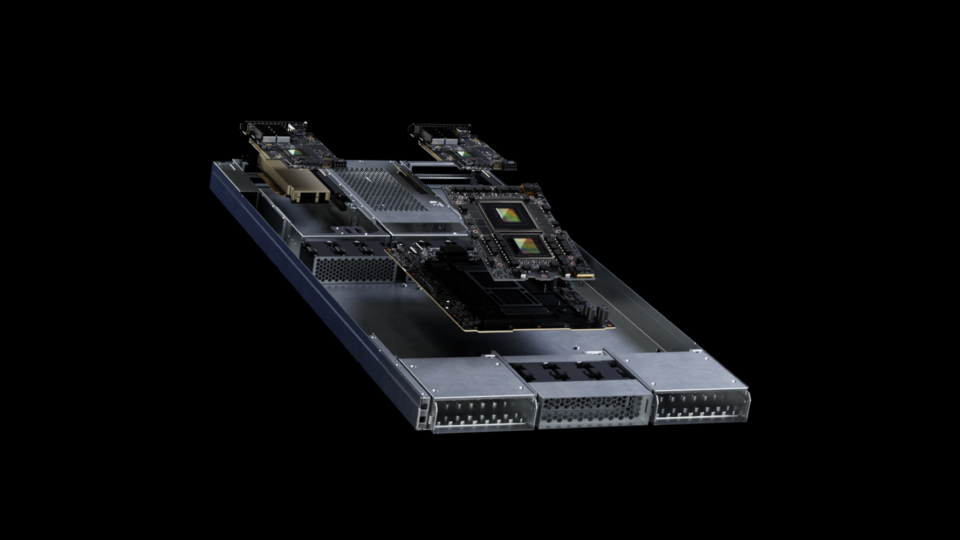

Upgrading Multi-GPU Interconnectivity with the Third-Generation NVIDIA NVSwitch

Increasing demands in AI and high-performance computing (HPC) are driving a need for faster, more scalable interconnects with high-speed communication between...

13 MIN READ

Jun 02, 2022

Fueling High-Performance Computing with Full-Stack Innovation

High-performance computing (HPC) has become the essential instrument of scientific discovery. Whether it is discovering new, life-saving drugs, battling...

8 MIN READ

Apr 12, 2021

Optimizing Data Movement in GPU Applications with the NVIDIA Magnum IO Developer Environment

Magnum IO is the collection of IO technologies from NVIDIA and Mellanox that make up the IO subsystem of the modern data center and enable applications at...

8 MIN READ